Set up and maintain Exceptional Alerts

Networks are not perfect; mistakes get shipped; your code, integrations, and users will surprise you. Testing in Production is your safety net. Keeping a good eye on your Live system is key to supporting your applications and users.

Monitors work like test automation on your live system. Set your expectations and they will keep checking, alerting the team when the expectation fails.

But just like your test-automation suites, these can become a source of problems if you don't set them up well and treat them right.

I'll show how I've learnt to use alerts effectively, and how I make this a team-focused activity.

Building Monitoring

The fewer the better, but not too few

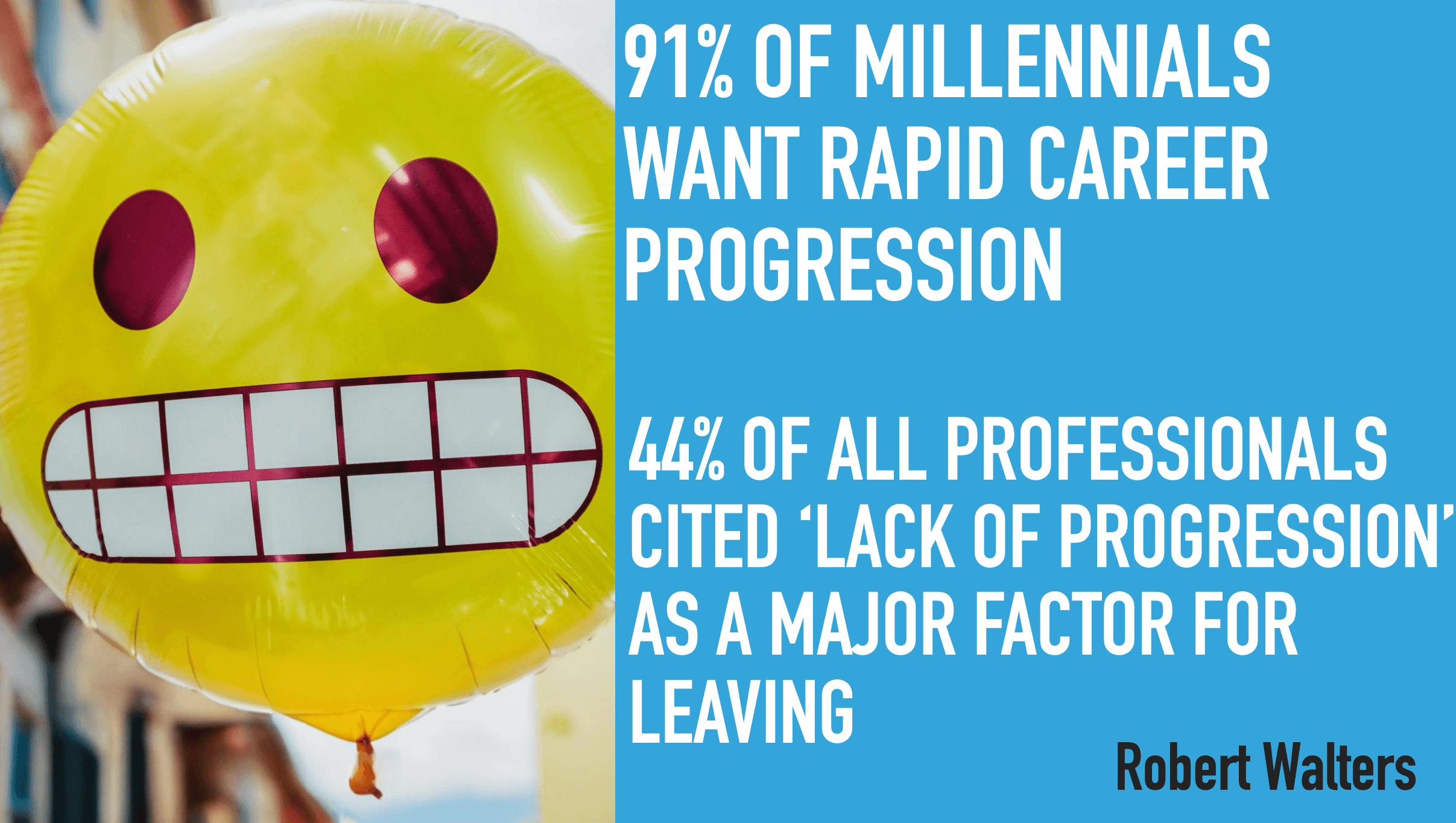

Monitoring systems often have access to a lot of different data sets. HTTP status codes, throughput times and, higher-level application errors and events. There are a lot of options and it can be tempting to monitor all the things.

Too many monitors will likely lead to noise, distractions and a pile of maintenance hassle.

It's worth taking a little time to work out what's important and start your monitoring there.

What would be key indicators of the system failing your users? How would you tell if the product is not doing its key jobs? What can't you afford to fail?

A few general catch-all monitors are also very useful. I like to keep an eye on the 5xx and 4xx status codes and alert on anomalies. However, it's important to not have these raising false alarms.

Alerts should not cry wolf

An alert should be there to highlight the unexpected and unwanted. They are your tests-in-production and their expectations need to be set at a level to not be flakey or noisy.

In your early days, it can take a short while to get this right. You might need to tune your alerts so they don't treat the normal as exceptional. Monitoring services that offer ways to use historic data to spot abnormalities can be a great shortcut.

Alerts should communicate well

We shouldn't expect everyone on your team to be experts in every part of the system or remember why an alert got set up.

We need to leave notes for the future in our monitor set-up so we are reminded what's important about this alert when it triggers.

For clear action to be taken, an alert needs to be clear about:

- What has happened, and how critical it is

- What sources of information will help

- What should be done next

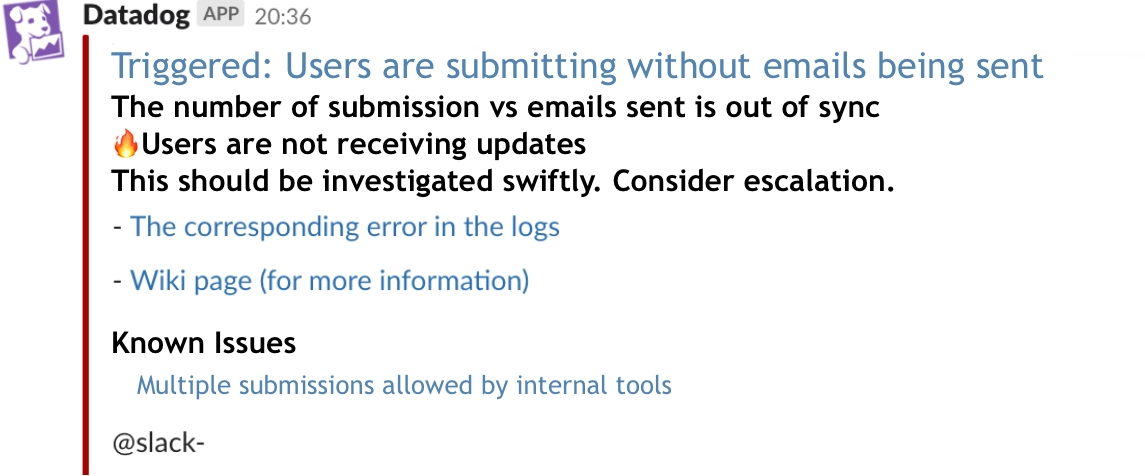

The example below first makes a statement of what the alert is about. It then links to logs to get more information.

Critically it has a call to action, making clear who it might affect and what we might need to do.

Maintaining Monitoring

Alerts need tending

Alerts can be like a garden. Untended, they can overgrow their space and crowd out what's important. Alerts that have fulfilled their purpose need grubbing up. Those taking up too much space need pruning; confusing alerts need weeding out.

When you have a live issue, it's well worth evaluating your monitoring and tuning it so it's telling you the right thing at the right time.

- Did an alert call out the issue in a timely manner?

- Were there alerts ignored until there clearly is an issue?

- Do alerts you no longer care about hide the ones you do?

If you can answer yes to one or more of these, you should review your monitors, and make them right for the team. Don't let a critical issue get crowded out of your plot.

Alerts are a call to action: fix the root causes, build resilience.

If the same alerts keep firing for good reasons, either your team will be doing nothing but checking for issues or beginning to treat them as noise. It's important to understand the root causes of your issues and go beyond making simple repairs or surface fixes.

It's possible you have a resilience issue or a complex bug. Consider how your team should be reviewing, learning and finding ways to make the system self-healing or correcting.

Reacting to Live Alerts is not chore work.

Managing your live service should not be a chore. It's a key point of experience, learning, and assistance. It should be at the heart of what you do. A great team attitude to monitoring can really help with that.

credits

Cover Photo by Markus Spiske on Unsplash